Brought to you by S2N Navigator, a research and performance analysis platform for traders who care about truth. It connects backtests, live trades, and portfolio data into a single workflow that highlights bias, overfitting, and fragile strategies before they cost real money. It also runs a number of research-focused AI agents, and I write a building-in-public newsletter about it.


There are times when it is better to keep quiet or say little, such as when the world is in the grip of a war and you are far from the critical information that will determine the next steps.

It hasn’t stopped the dozens of market commentators I follow. I get it; for some, noise is their business. At Signal2Noise I will speak when I have something to say that is more than just a gut feel, perceived or otherwise.

My prayers and good wishes to the USA and Israel and those who have been subjugated in Iran by an oppressive regime.

S2N Spotlight

I really wasn’t going to write about this; it actually wrote itself. Not in the AI sense, rather in the sense of my intuition under the sway of my subconscious.

I think everyone reading will gain a bit more perspective about the business economics of AI. It is a subject that has infiltrated our lives and, in some cases like mine, our minds, yet its economics are seldom discussed.

I will start gently and build from there.

Last week Pope Leo asked priests to stop using artificial intelligence to write their sermons.

It appears even the divine has limits to productivity gains.

Sam Altman pulled off one of the most impressive capital-raising feats known to man. Last Friday he signed off on a record $110 billion raise at a $730 billion valuation. If you don’t know how big that is, the rough estimate for the whole of 2025 was $470 billion for the entire VC industry. One company raising almost a quarter of annual global venture capital tells you something about where capital believes the future lies.

While I am speaking about AI at this level, I have to mention that around the same time the OpenAI deal was announced, Dario Amodei, the CEO of Anthropic, stood his ground with the Pentagon and refused to amend the company’s safeguard rules. The Pentagon wanted the ability to carry out full, unfettered countrywide surveillance and to deploy fully autonomous weaponry. In other words, giving the kill switch to the bots.

I have to take my hat off to Dario and his board for their principles. This is a man who, together with his sister, walked away from top decision-making roles at OpenAI because of their fear of the company abusing or ignoring guardrails. They set up Anthropic with other leading scientists based on their shared ethical values.

One thing I have learned over the years is that if you make an ethical or moral stand, you will be confronted at some stage with a test of your resolve. It seems they have stood their ground at considerable cost in the short term. They lost the US government as a client. Their very financial existence is currently on a knife edge and depends on a large capital raise. I think the scales will tip their way in the medium to long term.

Now for the point I was originally trying to make, after a 600-word intro.

When you use your ChatGPT or Claude chatbot, you use the energy displayed in the table below. All the tables have been produced by Claude under my prompts, so I'm not sure if these days we need to give a hat tip to the source 🙂. For all the media hype about how much energy AI is using, it turns out a typical AI user is using about the same amount of energy as a person using an LED lightbulb for 3 hours a day.

Clearly the argument for energy shortages has to be a little more nuanced.

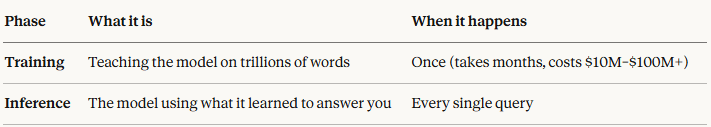

The big energy expense is happening at the training stage. If you are not yet familiar with the term 'inference', it is very important that you understand it now. It is basically the AI putting to work, each time it answers you, what it “learned” from all the data it has been trained on. More on this a little later.

Let me show you what the economics of an AI user are.

What this means in plain English is that on a simple query, Anthropic might charge you $0.002–0.01 in API costs, but their actual compute cost is probably $0.0003–0.001, a 3–10x markup on the compute itself.
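To make the arithmetic concrete, here is a minimal Python sketch that uses only the price and cost ranges quoted above. The function and variable names are illustrative, and the figures are the rough estimates from the text, not published numbers. It keeps a price multiple (markup) separate from a gross margin, since the two are easy to mix up.

```python
def markup(price: float, cost: float) -> float:
    """Price as a multiple of compute cost (10.0 means the price is 10x the cost)."""
    return price / cost


def gross_margin(price: float, cost: float) -> float:
    """Fraction of the price left over after paying the compute cost."""
    return (price - cost) / price


# Ranges quoted in the text, in USD per simple query (rough estimates only).
PRICE_LOW, PRICE_HIGH = 0.002, 0.01
COST_LOW, COST_HIGH = 0.0003, 0.001

# Try every pairing of the range endpoints to see how wide the spread is.
for price in (PRICE_LOW, PRICE_HIGH):
    for cost in (COST_LOW, COST_HIGH):
        print(
            f"price ${price:.4f}, cost ${cost:.4f}: "
            f"{markup(price, cost):.1f}x markup, "
            f"{gross_margin(price, cost):.0%} gross margin"
        )
```

The point of separating the two measures is that a price ten times the compute cost corresponds to a 90% gross margin on compute, before any training or operating costs are counted.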

Before you get too excited, that does not include the training and operation costs. I have already gone into quite a lot of detail, so let me bring it home without delving more into the costs.

The AI companies and their investors are betting on inference improvements. Inference is simply the act of the model generating a response, as opposed to training, which is teaching the model in the first place.

This is where I want to share some of my own thoughts on the matter.

The data centre developers and financiers want you to believe that there is an insatiable amount of energy needed. I actually don’t believe it.

Much of the energy debate ignores substitution effects. Global air conditioning alone consumes multiples of current AI inference demand. The constraint may not be physics but allocation of discomfort.

On a more intellectual note, we are already at the point where almost every bit of known data has been digitised and become part of training data. Not all, but nearly all. So much so that one of the biggest sub-industries is synthesising data, i.e., making stuff up that seems real. I don’t know about you, but I am a first-derivative kind of guy when it comes to my information. I don’t even allow Monte Carlo into my forecasting.

What I am trying to say in a somewhat robotic way is that I believe we are getting closer and closer to much more intuitive models, which means inference improvements.

There's a credible school of thought that a genuine architectural breakthrough, something beyond the current transformer architecture, could do what transistor miniaturisation did for computing. The current models are essentially very sophisticated pattern matchers. If someone cracks a more efficient reasoning architecture, training costs collapse. Inference becomes commoditised. The energy debate fades into irrelevance.

My concluding thoughts.

Whenever I travelled internationally, I used to watch a series called Silicon Valley. I don’t think I watched all the seasons, but the theme throughout the seasons I was watching was about solving compression technology. The internet was taking off like a rocket; everyone’s hard drives were filling up too quickly, and digital photography was becoming an insatiable consumer of digital space. I cannot remember when last the subject of storage has been an issue. Mind you, I get regular calls from my mom, who is determined to fit her digital life into her iCloud free allowance of 5 GB.

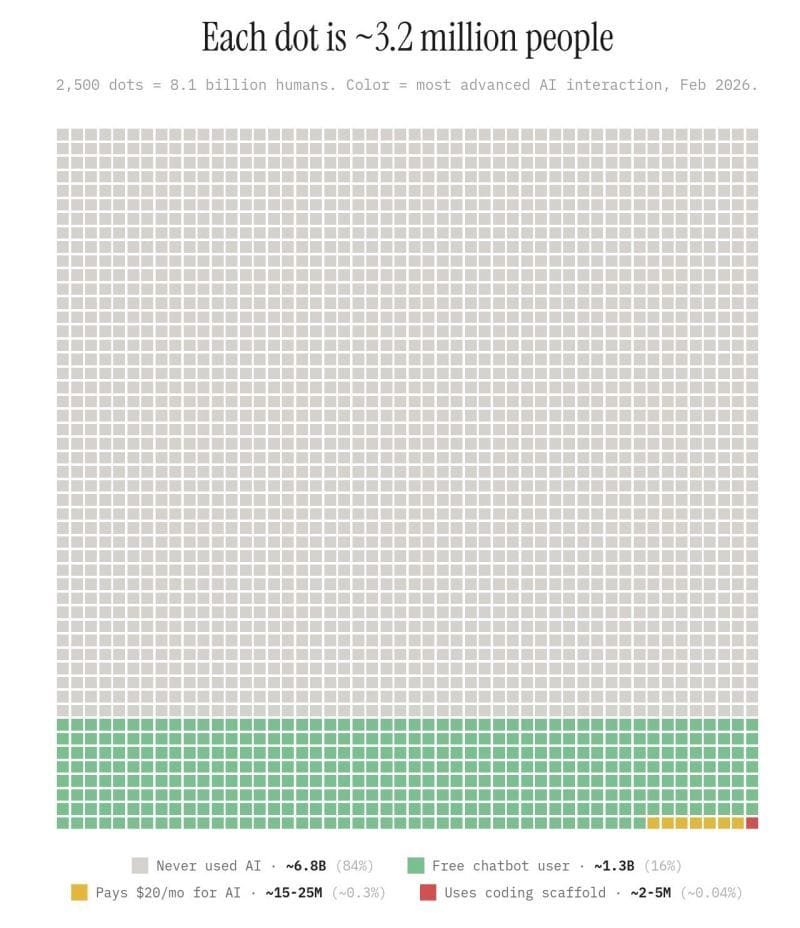

This too will pass, and AI will become even more economical for the users and the suppliers. I came across this infographic last week, and it blew my mind. As an early adopter, I thought the world was using AI like I am, but we are really at the very early stage of mass adoption.

S2N Observations

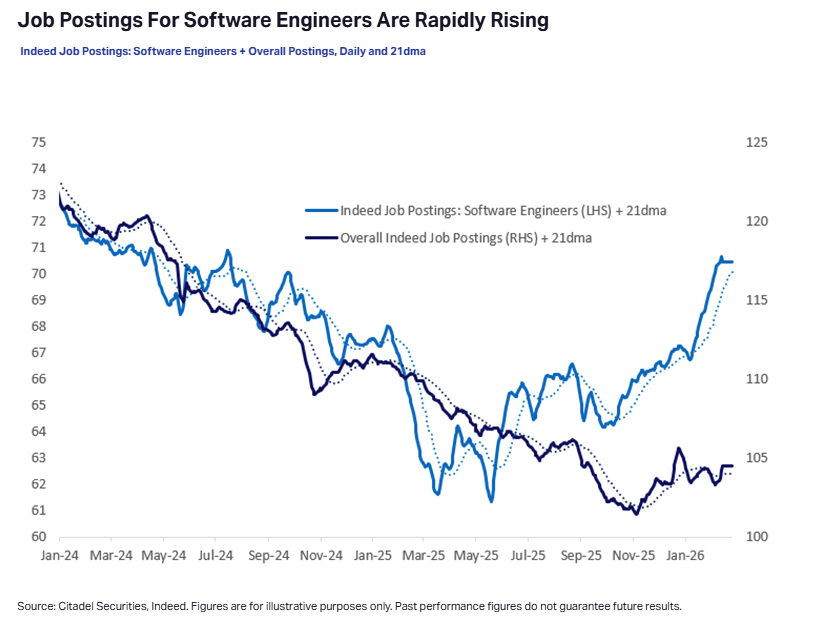

Citadel Securities put out a memo as a rebuttal to a newsletter post that went viral about the end of jobs in 2028. This was one of their charts. I am more in the camp of massive job losses but shifted a little in my last letter with some jobs being replaced by fact-checkers and defence against bad actors. I don’t see only doom, but I do see a deep valley to cross. Hopefully not the valley of death; I only use that term because I am currently trying to cross the chasm in a software startup.

This is the chart I was going to do a whole spotlight on.

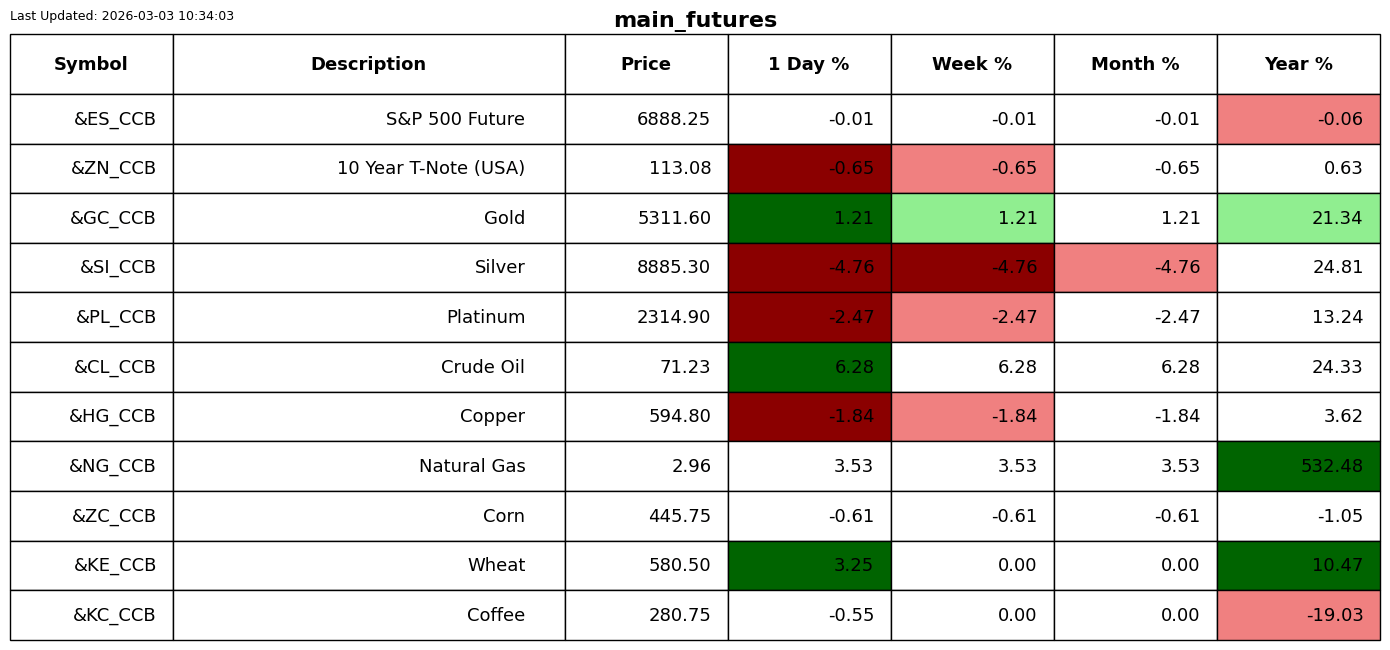

Everyone is talking about oil going through the roof due to the war. I thought I would just build a model that looks at history going back to 1983.

My condition is what happens over the next 6 months when crude oil rises more than 20% over a 30-day period. We triggered the latest event yesterday, the 2nd of February 2026. When this trigger fires, crude oil's average move over the next 6 months is an increase of 59%.

After the same oil price shocks, the S&P 500's average move over the next 6 months is an increase of 8%.

For me what weakens the argument is that there are no events of this nature before 2020. That is suspicious. I am out of time for today, so I am not going to explore further.
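For anyone who wants to poke at a condition like this, below is a minimal Python sketch of the trigger-and-forward-return idea. It is not the model behind the chart. The assumptions are loud ones: "30-day" is read as 30 price bars, "6 months" as 126 trading days, a cooldown stops one spike from being counted repeatedly, and bars with a non-positive starting price are skipped because percentage changes are meaningless there. All names and the synthetic prices are hypothetical.

```python
from statistics import mean


def find_triggers(prices, lookback=30, threshold=0.20, cooldown=30):
    """Indices where price is up more than `threshold` versus `lookback` bars earlier.

    `cooldown` suppresses repeat triggers from the same spike (an assumption;
    the text does not say how overlapping events are handled).
    """
    triggers = []
    next_allowed = 0
    for i in range(lookback, len(prices)):
        base = prices[i - lookback]
        if base <= 0:  # assumption: skip bars where a % change is undefined
            continue
        change = prices[i] / base - 1.0
        if change > threshold and i >= next_allowed:
            triggers.append(i)
            next_allowed = i + cooldown
    return triggers


def forward_returns(prices, triggers, horizon=126):
    """Return over the next `horizon` bars for each trigger with enough data left."""
    return [
        prices[i + horizon] / prices[i] - 1.0
        for i in triggers
        if i + horizon < len(prices) and prices[i] > 0
    ]


# Synthetic series purely to show the mechanics (these are not crude oil prices).
prices = [100.0] * 40 + [125.0] * 100 + [145.0] * 100
events = find_triggers(prices)
results = forward_returns(prices, events)
print("trigger indices:", events)
print("average forward return:", mean(results) if results else None)
```

Passing the same trigger indices to `forward_returns` with a date-aligned equity index series would give the second statistic. Listing the trigger dates alongside the raw prices is also a cheap way to check whether a cluster of events after 2020 comes from the data itself or from how the percentage change is computed.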

One more for the ditch.

As a certified contrarian, I think the short interest in technology is just screaming for a bear squeeze.

S2N Screener Alert

My screener is resting today.

If someone forwarded you this email, you can subscribe for free.

Please forward it to friends if you think they will enjoy it. Thank you.

S2N Performance Review

S2N Chart Gallery

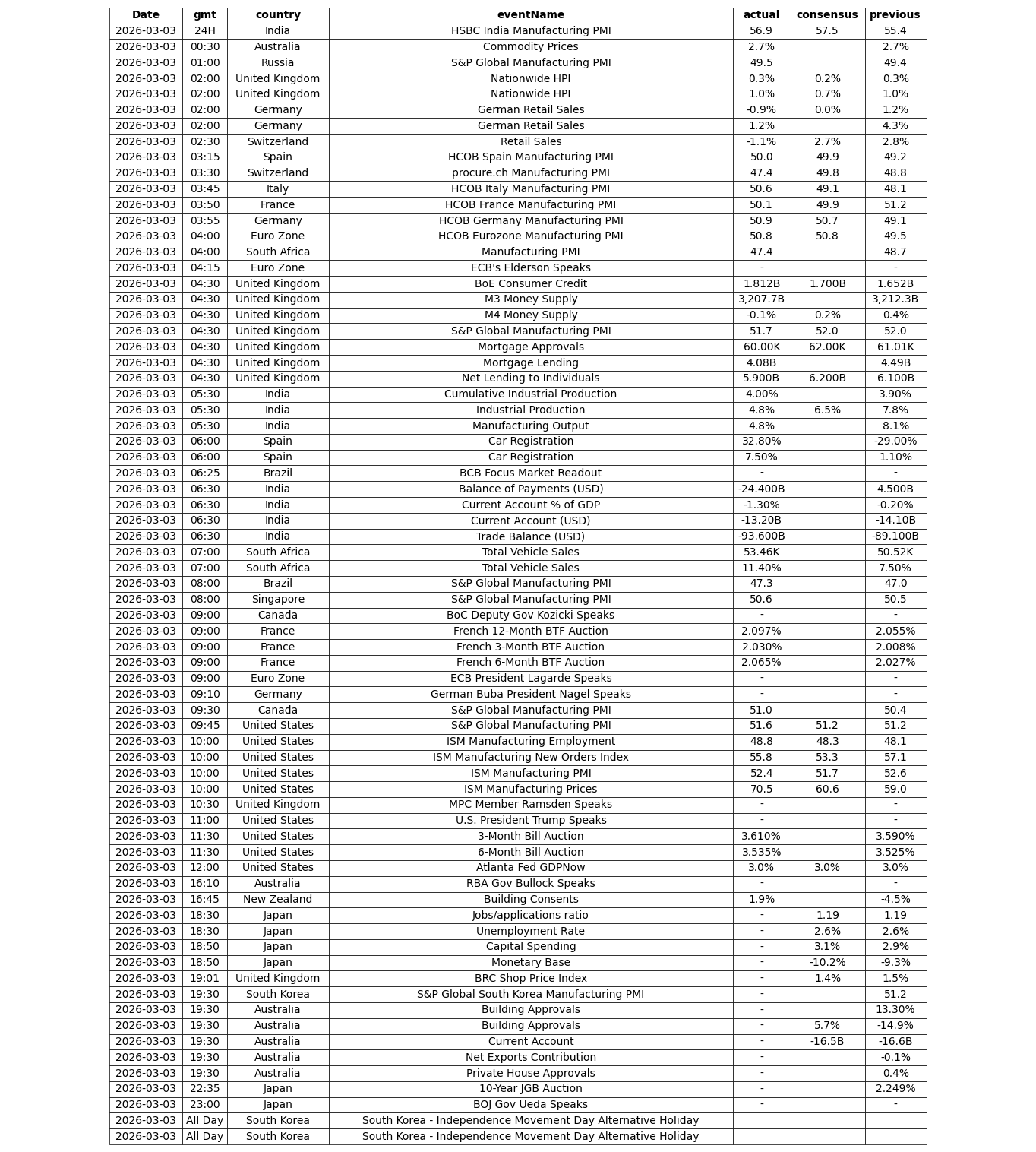

S2N News Today
